May 12, 2017

Kafka Consumer Architecture - Consumer Groups and subscriptions

This article covers some lower level details of Kafka consumer architecture. It is a continuation of the Kafka Architecture, Kafka Topic Architecture, and Kafka Producer Architecture articles.

This article covers Kafka Consumer Architecture with a discussion of consumer groups, how record processing is shared among the consumers in a group, and how failover works for Kafka consumers.

Cloudurable provides Kafka training, Kafka consulting, Kafka support and helps setting up Kafka clusters in AWS.

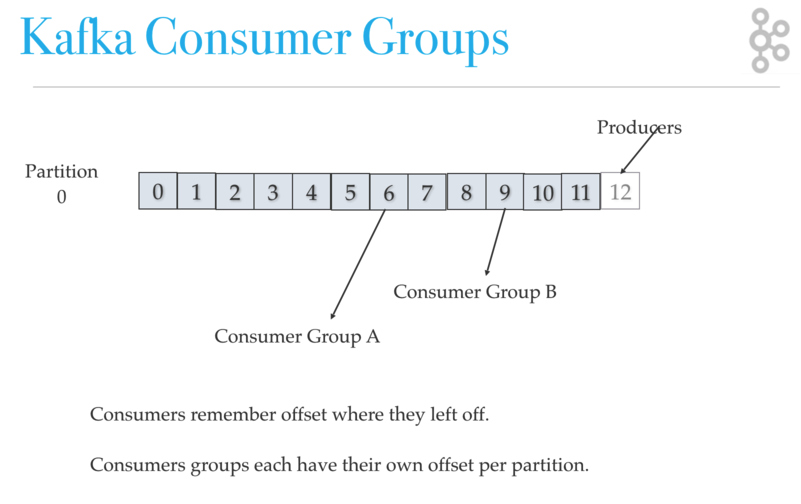

Kafka Consumer Groups

You group consumers into a consumer group by use case or function of the group. One consumer group might be responsible for delivering records to high-speed, in-memory microservices while another consumer group is streaming those same records to Hadoop. Consumer groups have names to distinguish them from other consumer groups.

A consumer group has a unique id. Each consumer group is a subscriber to one or more Kafka topics. Each consumer group maintains its offset per topic partition. If you need multiple subscribers, then you have multiple consumer groups. A record gets delivered to only one consumer in a consumer group.

Each consumer in a consumer group processes records, and a given record is delivered to only one consumer in that group. Consumers in a consumer group load balance record processing.

Kafka Architecture: Kafka Consumer Groups

Consumers remember the offset where they left off reading. Each consumer group has its own offset per partition.

Kafka Consumer Load Share

Kafka divides a topic's partitions over the consumer instances within a consumer group. Each consumer in the consumer group is the exclusive consumer of a “fair share” of partitions. This is how Kafka load balances consumers in a consumer group. Consumer membership within a consumer group is handled dynamically by the Kafka protocol. If a new consumer joins a consumer group, it gets a share of partitions. If a consumer dies, its partitions are split among the remaining live consumers in the consumer group. This is how Kafka does failover of consumers in a consumer group.
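The load sharing and failover described above can be sketched as a small simulation. This is not Kafka's actual assignor, only an illustration that assumes a plain round-robin split; the class `GroupAssignmentSketch`, the method `assign`, and the consumer names are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical simulation of spreading partitions across the live members of
// one consumer group. Kafka's real assignors are more involved; this only
// illustrates the "fair share" idea with a round-robin split.
final class GroupAssignmentSketch {

    // Gives each partition to exactly one consumer, cycling through the members.
    static Map<String, List<Integer>> assign(List<String> consumers, int partitionCount) {
        Map<String, List<Integer>> assignment = new LinkedHashMap<>();
        for (String consumer : consumers) {
            assignment.put(consumer, new ArrayList<>());
        }
        if (consumers.isEmpty()) {
            return assignment; // nobody to assign to
        }
        for (int partition = 0; partition < partitionCount; partition++) {
            String owner = consumers.get(partition % consumers.size());
            assignment.get(owner).add(partition);
        }
        return assignment;
    }

    public static void main(String[] args) {
        // Three consumers share six partitions.
        System.out.println(assign(List.of("c0", "c1", "c2"), 6));
        // c1 dies: recomputing the assignment hands its partitions to the survivors.
        System.out.println(assign(List.of("c0", "c2"), 6));
    }
}
```

Note that this naive version recomputes the whole assignment whenever membership changes, so surviving consumers may also see their partitions move. It is only meant to show that every partition always has exactly one owner within the group.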

Kafka Consumer Failover

Consumers notify the Kafka broker when they have successfully processed a record, which advances the offset.

If a consumer fails before sending the offset commit to the Kafka broker, then a different consumer can continue from the last committed offset.

If a consumer fails after processing the record but before sending the commit to the broker, then some Kafka records could be reprocessed. In this scenario, Kafka provides at-least-once behavior, and you should make sure that processing of the messages (record deliveries) is idempotent.
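The reprocessing scenario above can be illustrated with a minimal sketch that uses no Kafka client at all. The class `AtLeastOnceSketch` and all of its members are hypothetical; the "sink" is assumed to be keyed by offset so that writing the same record twice has no additional effect.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical simulation of at-least-once delivery for a single partition.
final class AtLeastOnceSketch {
    private final List<String> partitionLog;                 // records in offset order
    private final Map<Long, String> sink = new HashMap<>();  // idempotent target, keyed by offset
    private long committedOffset = 0;                        // next offset to read after a restart
    private int deliveries = 0;                              // how many times any record was handled

    AtLeastOnceSketch(List<String> partitionLog) {
        this.partitionLog = partitionLog;
    }

    // Processes records starting at the committed offset. The consumer "crashes"
    // after processing the record at crashBeforeCommitAt but before committing it
    // (pass -1 for a run without a crash).
    void runConsumer(long crashBeforeCommitAt) {
        for (long offset = committedOffset; offset < partitionLog.size(); offset++) {
            sink.put(offset, partitionLog.get((int) offset)); // a repeated write changes nothing
            deliveries++;
            if (offset == crashBeforeCommitAt) {
                return; // processed, but the commit never reached the broker
            }
            committedOffset = offset + 1; // the commit points at the next record to read
        }
    }

    long committedOffset() { return committedOffset; }
    int deliveries() { return deliveries; }
    int sinkSize() { return sink.size(); }

    public static void main(String[] args) {
        AtLeastOnceSketch partition = new AtLeastOnceSketch(List.of("a", "b", "c"));
        partition.runConsumer(1);   // first consumer dies after processing offset 1
        partition.runConsumer(-1);  // replacement resumes from the last committed offset
        System.out.println("deliveries=" + partition.deliveries()
                + " distinct records in sink=" + partition.sinkSize());
    }
}
```

In this run the record at offset 1 is delivered twice, yet the sink ends up with one entry per record because the write is idempotent.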

Offset management

Kafka stores offset data in a topic called "__consumer_offsets". This topic uses log compaction, which means it only keeps the most recent value per key.

When a consumer has processed data, it should commit offsets. If the consumer process dies, it will be able to start up and resume reading where it left off based on the offset stored in "__consumer_offsets" or, as discussed, another consumer in the consumer group can take over.
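To make "most recent value per key" concrete, here is a rough sketch of how compaction collapses a stream of offset commits. It assumes a key made of group, topic, and partition, written here as a plain string; the class `OffsetCompactionSketch` and the group and topic names are hypothetical, and the real on-disk format differs.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical model of a compacted offsets log: every commit is appended,
// and compaction keeps only the latest value for each key.
final class OffsetCompactionSketch {
    private final List<Map.Entry<String, Long>> commitLog = new ArrayList<>();

    // Appends one commit; the key identifies a group's position in one partition.
    void commit(String group, String topic, int partition, long offset) {
        String key = group + "/" + topic + "/" + partition;
        commitLog.add(Map.entry(key, Long.valueOf(offset)));
    }

    // Replays the log in order so that later commits overwrite earlier ones.
    Map<String, Long> compact() {
        Map<String, Long> latest = new LinkedHashMap<>();
        for (Map.Entry<String, Long> entry : commitLog) {
            latest.put(entry.getKey(), entry.getValue());
        }
        return latest;
    }

    public static void main(String[] args) {
        OffsetCompactionSketch offsets = new OffsetCompactionSketch();
        offsets.commit("hadoop-loader", "orders", 0, 5);
        offsets.commit("hadoop-loader", "orders", 0, 9);  // supersedes the commit of 5
        offsets.commit("microservices", "orders", 0, 3);  // a different group, a different key
        System.out.println(offsets.compact());
    }
}
```

Because each consumer group has its own key per partition, two groups reading the same partition keep independent positions.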

What can Kafka consumers see?

What records can be consumed by a Kafka consumer?

Consumers can’t read un-replicated data.

Kafka consumers can only consume messages up to the “High Watermark” offset of the partition.

“Log end offset” is the offset of the last record written to the log partition and marks where producers write next.

“High Watermark” is the offset of the last record that was successfully replicated to all of the partition’s in-sync followers.

A consumer only reads up to the “High Watermark”.
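The relationship between these two offsets can be sketched in a few lines. The sketch follows this article's simplified definitions (the high watermark is the offset of the last record every tracked replica has) and is not broker code; `HighWatermarkSketch`, `highWatermark`, and `readable` are hypothetical names.

```java
import java.util.List;

// Hypothetical model of which records a consumer may read from one partition.
final class HighWatermarkSketch {

    // Takes the last offset held by each replica (leader and in-sync followers)
    // and returns the last offset that all of them hold, or -1 if there are none.
    static long highWatermark(List<Long> lastOffsetPerReplica) {
        if (lastOffsetPerReplica.isEmpty()) {
            return -1;
        }
        long lowest = Long.MAX_VALUE;
        for (long lastOffset : lastOffsetPerReplica) {
            lowest = Math.min(lowest, lastOffset);
        }
        return lowest;
    }

    // Returns the records a consumer can read starting at fetchOffset:
    // everything up to and including the high watermark, nothing after it.
    static List<String> readable(List<String> leaderLog, long fetchOffset, long highWatermark) {
        int from = (int) Math.max(0, fetchOffset);
        int to = (int) Math.min(leaderLog.size(), highWatermark + 1);
        return from >= to ? List.of() : leaderLog.subList(from, to);
    }

    public static void main(String[] args) {
        List<String> leaderLog = List.of("r0", "r1", "r2", "r3", "r4");
        // The leader holds offsets up to 4; the two followers have caught up to 3 and 2.
        long hw = highWatermark(List.of(4L, 3L, 2L));
        System.out.println("high watermark=" + hw);
        System.out.println("readable=" + readable(leaderLog, 0, hw));
    }
}
```

Here the records at offsets 3 and 4 exist on the leader but stay invisible to consumers until the slowest tracked follower has copied them.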

Consumer to Partition Cardinality - Load sharing redux

Within a consumer group, only a single consumer can read from a given partition. If the number of consumers in a group exceeds the partition count, then the extra consumers remain idle. Kafka can use the idle consumers for failover. If there are more partitions than consumers in the group, then some consumers will read from more than one partition.

Kafka Architecture: Consumer Group Consumers to Partitions

Notice server 1 has topic partitions P2, P3, and P4 while server 2 has partitions P0, P1, and P5. Notice that Consumer C0 from Consumer Group A is processing records from P0 and P2. Notice that no single partition is shared by more than one consumer from the same consumer group. Notice that each consumer gets its fair share of partitions for the topics.

Multi-threaded Kafka Consumers

You can run more than one Consumer in a JVM process by using threads.

Consumer with many threads

If processing a record takes a while, a single Consumer can run multiple threads to process records, but it is harder to manage the offset for each Thread/Task. If one consumer runs multiple threads, then two messages from the same partition could be processed by two different threads, which makes it hard to guarantee record delivery order without complex thread coordination. This setup might be appropriate if processing a single task takes a long time, but try to avoid it.

Thread per consumer

If you need to run multiple consumers, then run each consumer in its own thread. This way Kafka can deliver record batches to the consumer and the consumer does not have to worry about the offset ordering. A thread per consumer makes it easier to manage offsets. It is also simpler to manage failover (each process runs X number of consumer threads) as you can allow Kafka to do the brunt of the work.
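The thread-per-consumer pattern can be sketched without a Kafka client by giving each thread its own disjoint set of partitions. Everything here is a hypothetical stand-in: `ThreadPerConsumerSketch` and `run` are illustrative names, and "processing" a record just means noting its offset. In a real application each thread would own its own consumer instance rather than share one.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

// Hypothetical simulation: one thread per consumer, each owning its partitions.
final class ThreadPerConsumerSketch {

    // Starts one thread per entry in partitionsPerConsumer. Each thread walks
    // its own partitions sequentially, so per-partition order is preserved
    // without any coordination between threads.
    static Map<Integer, List<Integer>> run(List<List<Integer>> partitionsPerConsumer,
                                           int recordsPerPartition) {
        Map<Integer, List<Integer>> processed = new ConcurrentHashMap<>();
        ExecutorService pool =
                Executors.newFixedThreadPool(Math.max(1, partitionsPerConsumer.size()));
        for (List<Integer> ownedPartitions : partitionsPerConsumer) {
            pool.submit(() -> {
                for (int partition : ownedPartitions) {
                    List<Integer> offsets = new ArrayList<>();
                    for (int offset = 0; offset < recordsPerPartition; offset++) {
                        offsets.add(offset); // "process" the record at this offset
                    }
                    processed.put(partition, offsets);
                }
            });
        }
        pool.shutdown();
        try {
            if (!pool.awaitTermination(10, TimeUnit.SECONDS)) {
                throw new IllegalStateException("consumer threads did not finish in time");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for consumers", e);
        }
        return processed;
    }

    public static void main(String[] args) {
        // Two consumer threads: one owns partitions 0 and 2, the other 1 and 3.
        System.out.println(run(List.of(List.of(0, 2), List.of(1, 3)), 3));
    }
}
```

Since no partition appears in more than one thread's list, no two threads ever touch the same partition, which is what keeps offset handling simple in this setup.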

Kafka Consumer Review

What is a consumer group?

A consumer group is a group of related consumers that perform a task, like putting data into Hadoop or sending messages to a service. Consumer groups each have unique offsets per partition. Different consumer groups can read from different locations in a partition.

Does each consumer group have its own offset?

Yes. Each consumer group has its own offset for every partition in the topic, independent of the offsets that other consumer groups have.

When can a consumer see a record?

A consumer can see a record after the record has been fully replicated to all in-sync followers.

What happens if there are more consumers than partitions?

The extra consumers remain idle until another consumer dies.

What happens if you run multiple consumers in many threads in the same JVM?

Each thread manages a share of partitions for that consumer group.

Related content

- What is Kafka?

- [Kafka Architecture](https://cloudurable.com/blog/kafka-architecture/index.html)

- Kafka Topic Architecture

- Kafka Consumer Architecture

- Kafka Producer Architecture

- Kafka Architecture and low level design

- Kafka and Schema Registry

- Kafka and Avro

- Kafka Ecosystem

- Kafka vs. JMS

- Kafka versus Kinesis

- Kafka Tutorial: Using Kafka from the command line

- Kafka Tutorial: Kafka Broker Failover and Consumer Failover

- Kafka Tutorial

- Kafka Tutorial: Writing a Kafka Producer example in Java

- Kafka Tutorial: Writing a Kafka Consumer example in Java

- Kafka Architecture: Log Compaction

- Kafka Architecture: Low-Level PDF Slides
